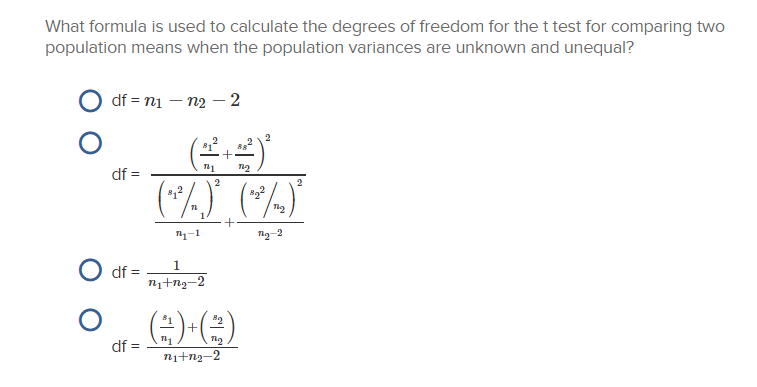

The degrees of freedom formula is straightforward: the degrees of freedom are often the sample size minus the number of parameters you're estimating. In an F-test, the degrees of freedom are the number of groups minus one for the numerator, and the total number of observations minus the number of groups for the denominator.
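As a rough illustration of the two rules above, here is a minimal Python sketch. The function names `residual_dof` and `anova_f_dof` are hypothetical helpers made up for this example, and the second one assumes the F-test in question compares group means, with one list entry per group.

```python
def residual_dof(n_observations, n_parameters):
    """Sample size minus the number of parameters estimated from the data."""
    if n_parameters >= n_observations:
        raise ValueError("need more observations than estimated parameters")
    return n_observations - n_parameters


def anova_f_dof(group_sizes):
    """Return (numerator, denominator) degrees of freedom for an F-test on groups.

    Numerator: number of groups minus one.
    Denominator: total number of observations minus the number of groups.
    """
    k = len(group_sizes)   # number of groups
    n = sum(group_sizes)   # total number of observations
    if k < 2 or n <= k:
        raise ValueError("need at least two groups and more observations than groups")
    return k - 1, n - k


# 25 observations, one estimated parameter (the mean)
print(residual_dof(25, 1))

# three groups with 10, 12 and 8 observations
print(anova_f_dof([10, 12, 8]))
```

With three groups and 30 observations in total, the second call gives 2 numerator and 27 denominator degrees of freedom.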

Degrees of freedom (DF) can be thought of as the amount of information you have to compute certain statistics. The more you want to compute, the more data and information you need. Let's learn more about how to compute and use DF.

You can think of DF as statistical money that you can spend to compute certain statistics. Different statistics require different amounts of money. The amount of DF you have is determined by the number of data points you have. If you run out of money, you can either get more by collecting more data or spend less by asking for fewer computations.

Overview: What are degrees of freedom?

Degrees of freedom refer to the number of values in a statistical calculation that are free to vary after certain constraints have been placed on the data. In other words, degrees of freedom are the number of values in a calculation that can be varied without affecting the final outcome of the calculation.

Degrees of freedom are important in statistical inference because they determine the accuracy of statistical estimates and test statistics. In general, as the number of degrees of freedom increases, the accuracy of the estimate or test statistic improves.

Degrees of freedom are used in many statistical tests, including t-tests, F-tests, and chi-square tests. In a t-test, for example, the degrees of freedom are the number of observations minus the number of parameters estimated from the data (usually one for the mean).

Formally, the p-value is the probability that the test statistic will produce values at least as extreme as the value it produced for your sample. It is crucial to remember that this probability is calculated under the assumption that the null hypothesis H₀ is true!

More intuitively, the p-value answers the question:

Assuming that I live in a world where the null hypothesis holds, how probable is it that, for another sample, the test I'm performing will generate a value at least as extreme as the one I observed for the sample I already have?

It is the alternative hypothesis that determines what "extreme" actually means, so the p-value depends on the alternative hypothesis that you state: left-tailed, right-tailed, or two-tailed. In the formulas below, S stands for a test statistic, x for the value it produced for a given sample, and Pr(event | H₀) is the probability of an event, calculated under the assumption that H₀ is true:

- Left-tailed test: p-value = Pr(S ≤ x | H₀)
- Right-tailed test: p-value = Pr(S ≥ x | H₀)
- Two-tailed test: p-value = 2 × min{Pr(S ≤ x | H₀), Pr(S ≥ x | H₀)}

(By min{a, b}, we denote the smaller of the numbers a and b.)

Below we list the most important tests that produce F-scores. All of them are right-tailed tests.

- A test for the equality of variances in two normally distributed populations. Its test statistic follows the F-distribution with (n - 1, m - 1) degrees of freedom, where n and m are the respective sample sizes.
- ANOVA is used to test the equality of means in three or more groups that come from normally distributed populations with equal variances. Its test statistic follows the F-distribution with (k - 1, n - k) degrees of freedom, where k is the number of groups, and n is the total sample size (in all groups together).
- A test for overall significance of regression analysis. The test statistic has an F-distribution with (k - 1, n - k) degrees of freedom, where n is the sample size, and k is the number of variables (including the intercept). Once this test has established the presence of a linear relationship in your data sample, you can calculate the coefficient of determination, R², which indicates the strength of this relationship.
- A test to compare two nested regression models. The test statistic follows the F-distribution with (k₂ - k₁, n - k₂) degrees of freedom, where k₁ and k₂ are the numbers of variables in the smaller and bigger models, respectively, and n is the sample size. You may notice that the F-test of overall significance is a particular form of the F-test for comparing two nested models: it tests whether our model does significantly better than the model with no predictors (i.e., the intercept-only model).
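The left-tailed, right-tailed, and two-tailed p-value formulas can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the helper names `p_value` and `standard_normal_cdf` are invented for this example, the test statistic S is assumed to be continuous (so Pr(S ≥ x) equals 1 minus the CDF at x), and a standard normal null distribution stands in for whatever distribution your test actually uses. For an F-test you would pass the CDF of the appropriate F-distribution instead.

```python
import math


def standard_normal_cdf(x):
    """CDF of the standard normal distribution (stand-in null distribution)."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def p_value(x, cdf, tail):
    """p-value of an observed statistic x under H0.

    cdf  -- function returning Pr(S <= x | H0); S is assumed continuous
    tail -- "left", "right", or "two"
    """
    left = cdf(x)        # Pr(S <= x | H0)
    right = 1.0 - left   # Pr(S >= x | H0) for a continuous statistic
    if tail == "left":
        return left
    if tail == "right":
        return right
    if tail == "two":
        return 2.0 * min(left, right)
    raise ValueError("tail must be 'left', 'right', or 'two'")


# Observed statistic x = 1.96 under a standard normal null distribution
for tail in ("left", "right", "two"):
    print(tail, round(p_value(1.96, standard_normal_cdf, tail), 4))
```

Passing the CDF in as an argument keeps the tail logic separate from the choice of distribution, so the same function covers any of the tests listed above once a suitable CDF is available.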